The hidden costs of standing still: A case for purposeful modernization

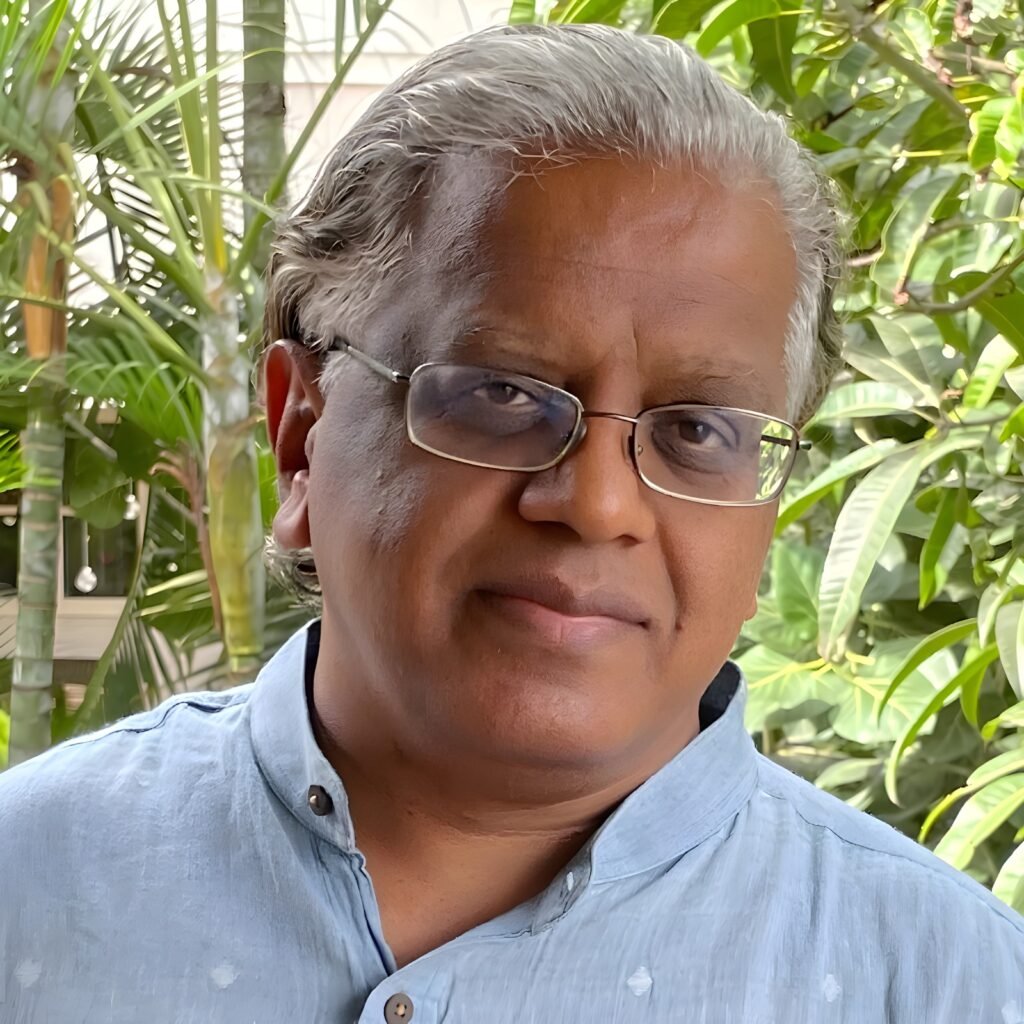

Technology modernization is rarely as clean as it looks on a roadmap. Amit Patil, MD & Founder, CynalitX Consulting LLP, has thought deeply about what actually makes these programmes succeed or fail, from the weight of decades-old systems and the people who depend on them, to the cloud strategies and risk disciplines that determine whether a transformation holds together under pressure.

His views are direct and grounded in what he has seen work and what he has seen quietly derail otherwise well-planned efforts.

Technical debt is only half the problem

The most significant challenge, in Patil’s view, is the sheer complexity that accumulates over decades. Legacy systems were not built with modularity in mind. They are deeply interdependent, often undocumented, and critical to daily operations. You cannot simply switch them off. Organizations end up running parallel environments, which drives up both cost and cognitive load at the same time.

But he is equally clear that the human side is just as hard. Teams that have worked on stable, familiar systems for years often see modernization as a threat to their expertise rather than a chance to grow. That makes change management as important as any architecture decision. Without real investment in upskilling and clear communication, even the best technical strategy will stall.

Data migration is where many organizations underestimate the work involved. Decades of operational data carry inconsistencies, deprecated schemas and compliance obligations that do not map cleanly onto modern platforms. Patil’s point is firm: getting data right is not a secondary concern. It is the foundation everything else is built on. Organizations that treat it as a final step almost always find it should have been the first.

Remove the friction and teams move faster

Legacy architecture creates invisible friction at every stage of development. Engineers spend too much time maintaining fragile integrations rather than building new things. When monolithic systems are replaced with modular, API-first platforms, that resistance largely disappears. Teams can develop, test and deploy features independently, cutting out the inter-team dependencies that historically stretched delivery timelines from weeks into months.

Cloud-native platforms remove provisioning bottlenecks that used to require procurement cycles and physical setup. What once took weeks now takes minutes. Patil uses a practical example to make the point: a product experiment that previously needed a business case and a full budget cycle can now be prototyped and validated within a single sprint.

There is also a compounding effect that is easy to overlook. Modern platforms attract modern engineering talent. Developers working with containerization, CI/CD pipelines and observability tools tend to move faster and produce higher quality output. Over time, that talent advantage becomes a durable competitive edge.

Multi-cloud is a strategy, not a fallback

Patil sees hybrid and multi-cloud strategies as having moved well past contingency planning. They are now a deliberate architectural choice. No single cloud provider is uniformly strong across every workload. By distributing across providers, organizations can match specific requirements to whoever is best placed to deliver, whether that is machine learning infrastructure, data sovereignty, or low-latency edge computing.

From a scalability standpoint, multi-cloud removes dependence on any single provider’s capacity limits or pricing model. Organizations can scale resources precisely where demand arises without overprovisioning. This is especially useful for businesses that deal with seasonal spikes or rapid geographic expansion.

The flexibility argument also holds from a risk angle. Vendor lock-in tends to be a hidden cost that only surfaces at renewal time or when a provider changes its terms. A hybrid architecture keeps negotiating leverage intact and ensures business continuity if any single environment runs into problems.

Risk is a continuous signal, not a periodic audit

Patil is clear that risk management in a large-scale transformation is an operational discipline, not a governance checkbox. The organizations that come through these programmes successfully are the ones that treat risk as something to monitor continuously, not review occasionally.

He favours incremental delivery over big-bang cutovers. Progressive migration, running legacy and modern systems in parallel and validating at each checkpoint, limits the damage when something goes wrong. Rollback planning needs to be in place from day one, not added later as an afterthought.
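To make the idea concrete, here is a minimal Python sketch of a parallel run with a checkpoint and a rollback rule. It is an illustration under stated assumptions, not a description of Patil's own method: the names `run_checkpoint` and `next_traffic_share`, the 1% mismatch threshold and the 25% traffic step are all hypothetical choices made for the example.

```python
from dataclasses import dataclass
from typing import Any, Callable, Iterable


@dataclass
class CheckpointResult:
    """Outcome of comparing legacy and modern systems on one batch."""
    total: int
    mismatches: int

    @property
    def mismatch_rate(self) -> float:
        # An empty batch counts as a clean run.
        return self.mismatches / self.total if self.total else 0.0


def run_checkpoint(
    requests: Iterable[Any],
    legacy: Callable[[Any], Any],
    modern: Callable[[Any], Any],
) -> CheckpointResult:
    """Send every request to both systems and count disagreements.

    The legacy result is treated as the reference answer. An exception
    in the modern system is counted as a mismatch.
    """
    total = 0
    mismatches = 0
    for request in requests:
        total += 1
        expected = legacy(request)
        try:
            actual = modern(request)
        except Exception:
            mismatches += 1
            continue
        if actual != expected:
            mismatches += 1
    return CheckpointResult(total, mismatches)


def next_traffic_share(
    current_share: float,
    result: CheckpointResult,
    max_mismatch_rate: float = 0.01,  # assumed threshold, for illustration
    step: float = 0.25,               # assumed increment, for illustration
) -> float:
    """Decide how much live traffic the modern system gets next.

    A failed checkpoint rolls back to 0.0 (all traffic on legacy);
    a passed checkpoint moves one step closer to full cutover.
    """
    if result.mismatch_rate > max_mismatch_rate:
        return 0.0
    return min(1.0, current_share + step)
```

The rollback path exists before any traffic moves, which is the point of planning it from day one: a failed checkpoint costs one step of progress rather than an outage.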

Cybersecurity deserves particular attention during transitions. When old and new environments are running alongside each other, the attack surface expands. Security architecture needs to keep pace with infrastructure changes, not lag behind. Regulatory and data compliance obligations do not pause during a migration either. They need to be mapped, validated and evidenced throughout the entire process.

AI, sustainability and the expanding edge

Looking at the next three to five years, Patil expects AI to move from being a feature layered onto modernized systems to being embedded in the modernization process itself. AI-assisted code migration, automated documentation and intelligent testing frameworks are already cutting the manual effort involved in legacy transformation. Over time, this will change the economics of modernization and make it viable for organizations that previously could not justify the investment.

Platform engineering will also mature. Instead of each product team assembling its own tools, organizations will invest in internal developer platforms that offer standardized, self-service infrastructure. This creates consistency, speeds up onboarding and frees engineering teams to focus on business problems rather than infrastructure configuration.

Sustainability is another factor that Patil expects to move from reputational concern to structural constraint. Regulatory pressure around energy consumption and carbon reporting will start shaping cloud architecture decisions directly. Organizations that do not account for infrastructure efficiency will face both compliance risk and cost exposure as energy pricing and carbon obligations tighten.

From legacy to cloud-native: Why modernization can no longer wait

The debate around legacy modernization has been going on for years, but Suresh Anantpurkar, Founder and CEO of Manch Technologies, thinks the window for hesitation is closing fast. As AI reshapes what enterprise technology needs to do, the gap between organizations running modern systems and those still holding on to old infrastructure is only going to widen. His perspective across infrastructure, cloud strategy, risk and the road ahead reflects someone who has thought carefully about where this is all heading.

Old systems, new problems

The core difficulty with moving from on-premise systems to modern digital platforms, according to Anantpurkar, is that the two were built in entirely different ways. Legacy systems were developed with older technologies that do not validate data well. Cloud-based systems, by contrast, are designed with interoperability in mind from the ground up.

Making that jump is not straightforward. Data migration, security, access controls and network policy alignment all need careful thought before any move can happen.

But Anantpurkar is firm that the transition cannot be avoided. Staying on old systems is not a safe choice either. It limits scalability and, increasingly, it locks organizations out of the AI capabilities that are becoming central to how businesses operate.

Agility is what modern systems are built for

Anantpurkar points to agility as the defining advantage of modern core systems, something legacy infrastructure simply cannot offer. These systems are designed for the cloud, driven by APIs, and capable of handling large and complex data across multiple devices and channels.

Better data validation and workflow flexibility mean development cycles get shorter and teams can respond to changing market needs without being held back by the system underneath them.

He also highlights AI-readiness as one of the most important features of modern systems. As AI evolves rapidly, having infrastructure that can support it across the enterprise is not a nice-to-have. It is what separates organizations that can operationalize AI from those that cannot.

Scale on demand, without the maintenance burden

Hybrid and multi-cloud models have given Manch Technologies the kind of scalability and flexibility that enterprise-level growth requires. Anantpurkar describes being able to grow or shrink infrastructure as needed, access better services and keep sensitive data secure, all through a cloud-native approach and the hyperscale providers they work with.

What also stands out for his teams is the reduction in infrastructure management and software maintenance. That overhead used to absorb a lot of time and energy. With it largely gone, teams are free to focus on work that actually moves the business forward.

Risk management is what holds a transition together

Any significant technology changeover brings its own set of risks, and moving to cloud-based systems is no different. Data privacy, cloud security, access controls and cyber threats all come into sharper focus during a transition like this.

Anantpurkar sees this as putting IT management and the CISO role at the centre of the process. Their job is to identify risks, document them and put the right policies and structures in place to address them.

His view is simple: without proper risk management, a transition of this scale cannot be done well. It is not a parallel track. It is part of the work itself.

The next few years will separate the ready from the rest

Looking ahead, Anantpurkar expects the next three to five years to be defined by AI-native platforms, growing cloud adoption, new pricing models and a much stronger focus on data, processes and governance.

For organizations that invest in modernization now, the payoff will be the ability to take full advantage of AI as it matures. For those that do not, aging infrastructure will make it increasingly hard to keep up with the pace at which AI is moving.

Modernizing at speed: Building organizations that continuously evolve

Most conversations about digital transformation tend to get stuck on the obstacles. Divyanshu Bhushan, Technology and Business Head for India & SEA at TO THE NEW, takes a different view.

For him, the more useful question is not whether modernization is hard, but how you approach it. Across areas like legacy infrastructure, cloud strategy, innovation velocity and risk, his perspective is consistent: the organizations that treat transformation as an ongoing discipline rather than a one-time event are the ones that come out ahead.

Legacy modernization: complexity worth embracing

Bhushan is clear that modernization should not be seen as a burden. It is one of the most valuable things an organization can do, even if it brings real challenges along the way. Technical debt, integration issues, skill gaps and resistance to change are all part of the picture.

The problem with legacy systems is how deeply rooted they are. Replacing them carries risk, and in many organizations, a large share of the IT budget still goes toward just keeping them running. That leaves little room for anything new.

His view is that modernization works best when it is treated as an ongoing process rather than a one-off project. The goal is to figure out what still adds value, what needs to be rebuilt and what should simply be let go. Organizations that get this right are the ones that make modernization part of how they operate, not something they do once and move on from.

Modern architecture can cut time-to-market by up to half

Bhushan believes modernization changes the way innovation actually happens inside a company. In older monolithic setups, even small updates take a lot of effort, which means slower releases and less room to experiment.

Newer approaches, particularly API-first and microservices-based systems paired with DevOps practices, let teams work independently and ship continuously. Bhushan says organizations that have made this shift have seen time-to-market improve by as much as 50%.

But the bigger change, he says, is in mindset. Innovation stops being something that happens in planned cycles and becomes something that happens all the time. Teams stop waiting on infrastructure and start responding to what the business actually needs, when it needs it.

Cloud as a design principle, not a destination

For Bhushan, the conversation around cloud has moved on. The question is no longer which cloud to use, but which workload belongs where and why. That shift in thinking is what makes hybrid and multi-cloud strategies so useful.

Different workloads have different needs. Sensitive data can stay in controlled environments while high-demand, customer-facing applications scale on public cloud. The result is a setup that is more resilient, less dependent on any single vendor and better aligned with what the business actually requires.

It also means organizations can adapt as their needs change, without having to undo decisions made around a single platform or architecture.
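The "which workload belongs where" question in this section can be sketched as a small placement rule. The Python below is illustrative only: the `Workload` fields, the `place` function and the three environment labels are assumptions made for the example, and a real placement decision would weigh many more factors, such as cost, latency and regulation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    """Hypothetical description of a workload, for illustration only."""
    name: str
    handles_sensitive_data: bool
    customer_facing: bool
    demand_is_spiky: bool


def place(workload: Workload) -> str:
    """Return an environment label for a workload.

    Sensitive data is checked first, so it stays in a controlled
    environment even when the workload is also customer-facing.
    """
    if workload.handles_sensitive_data:
        return "private"
    if workload.customer_facing or workload.demand_is_spiky:
        return "public"
    return "either"


if __name__ == "__main__":
    portfolio = [
        Workload("payments-ledger", True, False, False),
        Workload("storefront", False, True, True),
        Workload("internal-wiki", False, False, False),
    ]
    for w in portfolio:
        print(f"{w.name}: {place(w)}")
```

Keeping the rule in one function means placement can be revisited as needs change, without the decision being baked into any single platform.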

Risk must be built in, not bolted on

Risk is unavoidable in large transformation programmes, but Bhushan thinks most organizations approach it the wrong way. Treating it as a final review or a compliance box to tick at the end rarely works. The better approach is to factor it in from the start.

That means weaving governance, security and monitoring into every phase of the work. Phased rollouts, continuous testing and solid observability practices all help keep systems stable while change is happening around them.

He also points to the human side. When leadership is visible, communication is clear and teams feel trusted, execution risk drops considerably. People who understand why something is changing and how they fit into it are much more likely to make it work.

The next frontier: AI, real-time data, and composable systems

Over the next few years, Bhushan expects architecture, data and intelligence to come together in ways that reshape what core systems can do. AI will stop being something added on top and will instead be woven into systems themselves, helping them adapt, optimise and make decisions on the fly. But that only becomes possible when the underlying data and platforms are modern enough to support it.

Platform engineering will also matter more than it does today. How easy it is for developers to build, test and ship will have a direct bearing on how fast a business can move. Alongside this, composable architectures built from modular, interchangeable parts will make it much easier to respond when the market shifts.

And real-time data will become the standard. Businesses will run on live information rather than reports that are already out of date by the time they are read.
